Let’s take a closer look at load balancing and explore some of the most widely adopted techniques for distributing incoming requests efficiently. These techniques have proven effective at spreading requests across multiple servers so that no single server is overwhelmed.

- Round Robin Technique. With the Round Robin technique, the load balancer sends each new request to the next server in a fixed list, from the first server to the nth, and then starts again from the beginning. In other words, it cycles through all servers in a set order. As a result, every server receives the same number of requests, which spreads the workload evenly as long as the servers have similar capacity and the requests cost roughly the same to handle.

In the diagram below, four servers handle incoming requests. The load balancer assigns requests to the servers one after another, so each server gets its turn and no single server is overloaded.

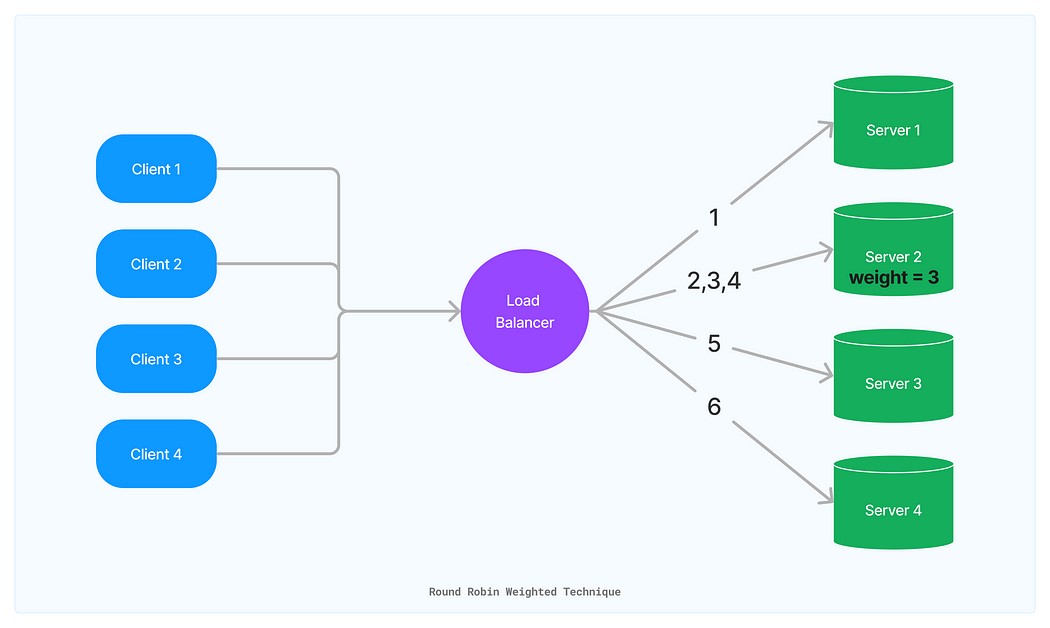
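The cycling behaviour can be sketched in a few lines of Python. This is a minimal illustration, not a production load balancer: the `RoundRobinBalancer` class and the server names are hypothetical, and a real balancer would also track server health and handle concurrent requests.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out servers in a fixed order, wrapping around after the last one."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("at least one server is required")
        # cycle() repeats the list endlessly: s1, s2, ..., sn, s1, s2, ...
        self._servers = cycle(list(servers))

    def next_server(self):
        """Return the server that should receive the next request."""
        return next(self._servers)


# Hypothetical server names, matching the four servers in the diagram.
balancer = RoundRobinBalancer(["server-1", "server-2", "server-3", "server-4"])
assignments = [balancer.next_server() for _ in range(6)]
print(assignments)
# ['server-1', 'server-2', 'server-3', 'server-4', 'server-1', 'server-2']
```

The fifth request wraps around to `server-1`, which is the "circling back to the beginning" step of Round Robin.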

- Weighted Round Robin Technique. This technique works like Round Robin but adds a weight to each server. The weight expresses a server’s relative capacity: in each cycle, a server with a weight of three receives three requests for every one sent to a server with a weight of one. By assigning weights, you can distribute traffic according to each server’s capacity.

In the depicted scenario, four servers handle incoming requests, but the second server is the most powerful and can handle three requests per second. To account for this difference, the second server is assigned a weight of three, while the others keep a weight of one. In each cycle of six requests, the second server therefore receives three requests and each of the others receives one. The load balancer thus allocates requests according to each server’s capacity, making better use of the available resources and improving overall performance.

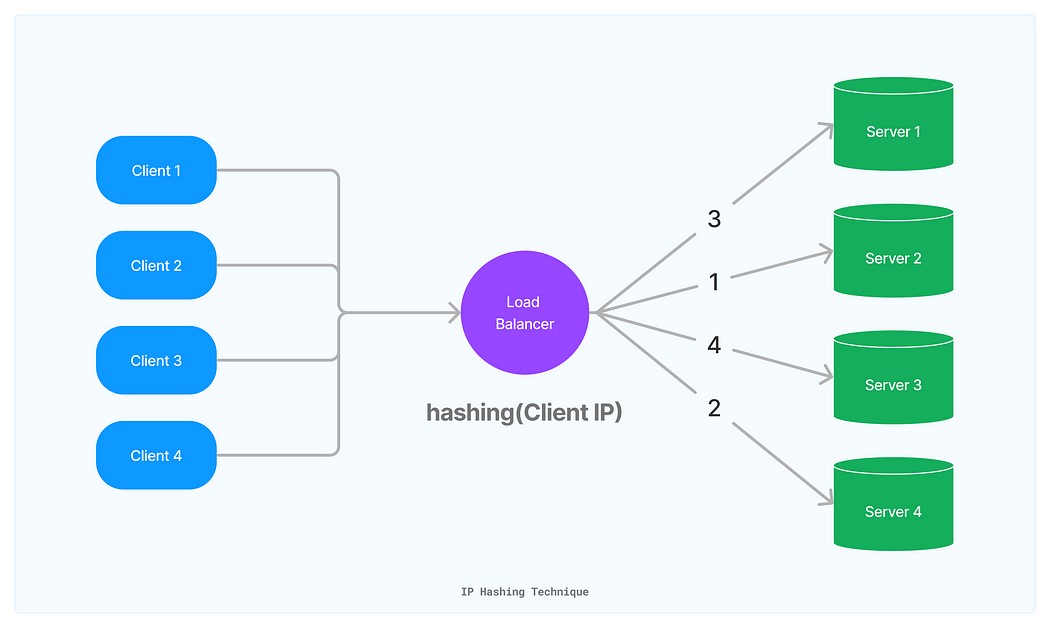
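Below is a minimal sketch of weighted round robin using the scenario’s weights (3 for the second server, 1 for the others). It implements a well-known "smooth" variant in which every server earns credit equal to its weight on each pick; the class name and server names are hypothetical, and this is one way to realize weighting, not the only one.

```python
class WeightedRoundRobinBalancer:
    """Smooth weighted round robin: each server is picked in proportion to its
    weight, and a heavy server's turns are interleaved with the others."""

    def __init__(self, weights):
        # weights: mapping of server name -> positive integer weight
        if not weights or any(w <= 0 for w in weights.values()):
            raise ValueError("weights must be positive")
        self._servers = list(weights)
        self._weights = [weights[s] for s in self._servers]
        self._total = sum(self._weights)
        self._current = [0] * len(self._servers)

    def next_server(self):
        # Every server earns credit equal to its weight on each pick...
        for i, w in enumerate(self._weights):
            self._current[i] += w
        # ...the server with the most credit wins (ties go to the earlier one)...
        best = max(range(len(self._servers)), key=lambda i: self._current[i])
        # ...and pays back the total weight so it cannot win every time.
        self._current[best] -= self._total
        return self._servers[best]


# Hypothetical servers; server-2 is the one that handles three requests per second.
balancer = WeightedRoundRobinBalancer(
    {"server-1": 1, "server-2": 3, "server-3": 1, "server-4": 1}
)
picks = [balancer.next_server() for _ in range(6)]
print(picks)
# ['server-2', 'server-1', 'server-2', 'server-3', 'server-4', 'server-2']
```

Over six requests, `server-2` is chosen three times and the others once each, matching the 3:1:1:1 weights, after which the credits return to zero and the pattern repeats. Simply listing each server `weight` times and cycling through that list would give the same proportions, but would send `server-2` three requests in a row instead of spreading them out.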

- IP Hashing-Based Distribution. In this technique, the load balancer uses the client’s IP address as a hash key, so that requests from the same client are routed to the same server as long as that server remains available. Several algorithms implement this idea, from a simple hash modulo the number of servers to consistent hashing.

As illustrated in the diagram, the load balancer hashes the client’s IP address to obtain a key. Because hashing the same value always produces the same result, a given client’s requests reach the same server every time they pass through the load balancer. This is particularly valuable when servers keep a local cache: requests consistently land on the server that already holds the client’s cached data, which increases cache hits.

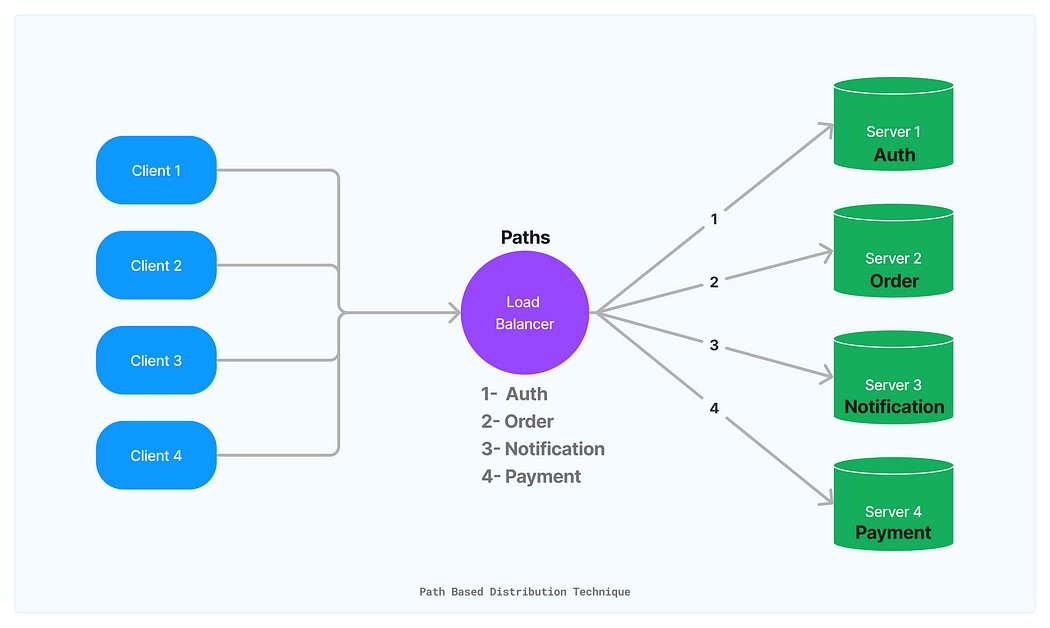
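The simplest form of IP hashing, a hash of the address taken modulo the number of servers, can be sketched as follows. The `pick_server` function and the server names are hypothetical, and SHA-256 is just one reasonable choice of stable hash function, not something the technique prescribes.

```python
import hashlib
import ipaddress


def pick_server(client_ip, servers):
    """Map a client IP to a server: stable hash of the IP, modulo server count."""
    if not servers:
        raise ValueError("at least one server is required")
    # Normalise the address so different notations of the same IP
    # (e.g. full vs. compressed IPv6) produce the same hash key.
    normalized = str(ipaddress.ip_address(client_ip))
    digest = hashlib.sha256(normalized.encode("ascii")).digest()
    index = int.from_bytes(digest[:8], "big") % len(servers)
    return servers[index]


# Hypothetical server pool and client address.
servers = ["server-1", "server-2", "server-3", "server-4"]
first = pick_server("203.0.113.25", servers)
second = pick_server("203.0.113.25", servers)
print(first == second)  # True: the same client always lands on the same server
```

The sketch uses `hashlib` instead of Python’s built-in `hash()` because string hashes are randomized per process, so two balancer processes would disagree about where a client belongs. Note that with plain modulo hashing, adding or removing a server changes `len(servers)` and remaps most clients to different servers; consistent hashing is a common refinement that limits that reshuffling.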

- Path-Based Distribution Technique. With this technique, the load balancer routes requests to servers based on the request path (for example, `/api` or `/images`). By deploying services to different servers and mapping their paths in the load balancer configuration, you can ensure that requests for a given service always reach the servers designated for it.

Because each service runs on its own servers, a failure or traffic spike in one service is less likely to disrupt the others. Used this way, path-based distribution can improve the overall reliability and resilience of your system and, in turn, the user experience.
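Path-based routing is essentially a lookup from path prefix to a group of backend servers. The sketch below uses longest-prefix matching on whole path segments; the `route_by_path` function, the route table, and the backend names are hypothetical, and real load balancers express such rules in their own configuration syntax.

```python
def route_by_path(path, routes, default=None):
    """Return the backend whose path prefix is the longest match for `path`."""
    best_prefix = None
    for prefix in routes:
        # Match whole path segments so that '/api' does not match '/apiary'.
        if path == prefix or path.startswith(prefix.rstrip("/") + "/"):
            if best_prefix is None or len(prefix) > len(best_prefix):
                best_prefix = prefix
    return routes[best_prefix] if best_prefix is not None else default


# Hypothetical route table: each service is deployed on its own server group,
# and "/" acts as a catch-all for everything else.
routes = {
    "/api": "api-servers",
    "/images": "image-servers",
    "/": "web-servers",
}

for path in ["/api/users/42", "/images/logo.png", "/apiary", "/about"]:
    print(path, "->", route_by_path(path, routes))
# /api/users/42 -> api-servers
# /images/logo.png -> image-servers
# /apiary -> web-servers
# /about -> web-servers
```

Preferring the longest matching prefix lets a specific rule such as `/api` take priority over the catch-all `/`. In practice, each backend group would usually contain several servers, with one of the techniques above distributing requests within the group.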

In this article, we have explored several techniques for distributing server traffic, each with its own use cases. Many other techniques exist, but these common and effective approaches should cover most scenarios. Ultimately, the right choice depends on the specific requirements of your application.

If you have any questions or would like to know more – do not hesitate to contact Skynix for a free consultation.